LLM Benchmarks - Directory with AI Reviews

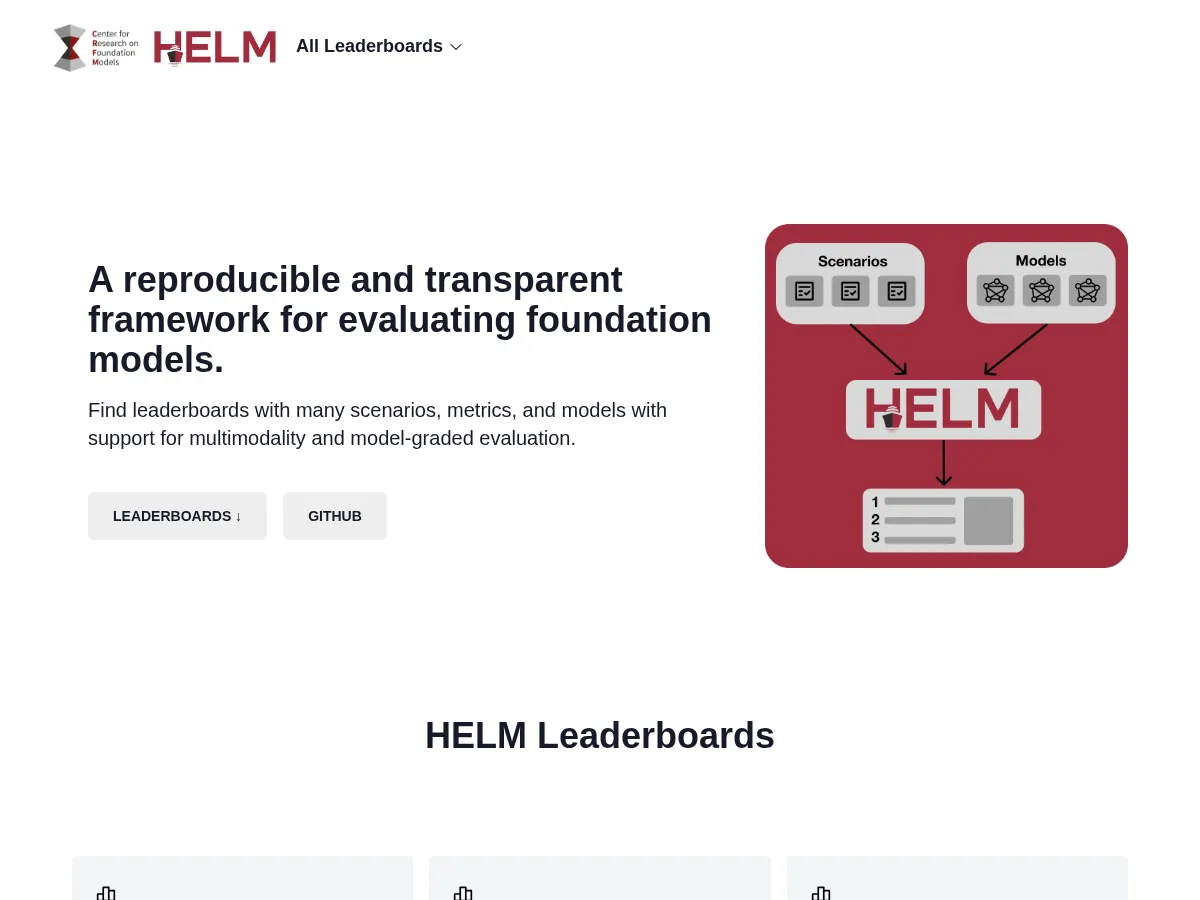

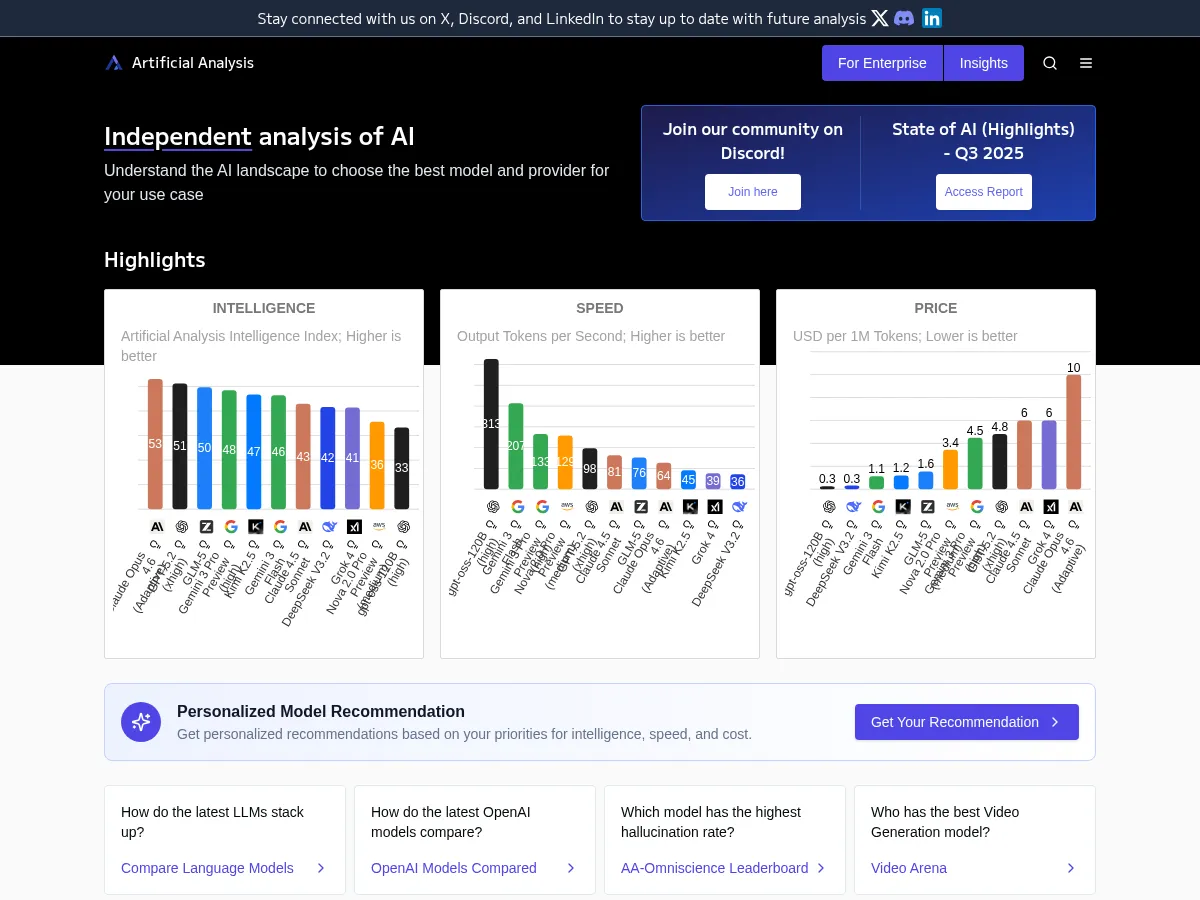

Choosing the right LLM for a task requires rigorous comparison across dimensions like reasoning, coding, multilingual ability, and cost. LMSYS Chatbot Arena uses crowdsourced human preference ratings to rank models on open-ended tasks. HELM (Holistic Evaluation of Language Models) provides standardized benchmark suites for academic and industry comparison, while the Hugging Face Open LLM Leaderboard tracks open-source model performance. Artificial Analysis adds infrastructure metrics like throughput and latency to the evaluation picture.
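To make the idea of ranking from human preference ratings concrete, here is a minimal sketch of an Elo-style update over pairwise votes. It is an illustration only: the function name `elo_ratings`, the model names, the vote data, and the `k` and `base` parameters are all assumptions, and the scoring actually used by any particular leaderboard may differ.

```python
from collections import defaultdict


def elo_ratings(votes, k=32.0, base=1000.0):
    """Turn (winner, loser) preference votes into Elo-style ratings.

    Hypothetical helper for illustration; not any leaderboard's real code.
    """
    ratings = defaultdict(lambda: base)
    for winner, loser in votes:
        # Probability the winner was expected to win, given current ratings.
        expected = 1.0 / (1.0 + 10 ** ((ratings[loser] - ratings[winner]) / 400.0))
        # Surprising wins move ratings more than expected ones.
        delta = k * (1.0 - expected)
        ratings[winner] += delta
        ratings[loser] -= delta
    return dict(ratings)


# Made-up votes: each tuple is (preferred model, other model).
votes = [
    ("model-a", "model-b"),
    ("model-a", "model-c"),
    ("model-b", "model-c"),
]
ratings = elo_ratings(votes)
ranking = sorted(ratings, key=ratings.get, reverse=True)
print(ranking)
```

Because each update adds to one rating exactly what it subtracts from the other, the total rating mass stays constant, so scores are only meaningful relative to each other. Sequential updates of this kind also depend on vote order, which is one reason a batch statistical fit is sometimes preferred.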
