Open Source LLMs - Directory w/ AI Reviews

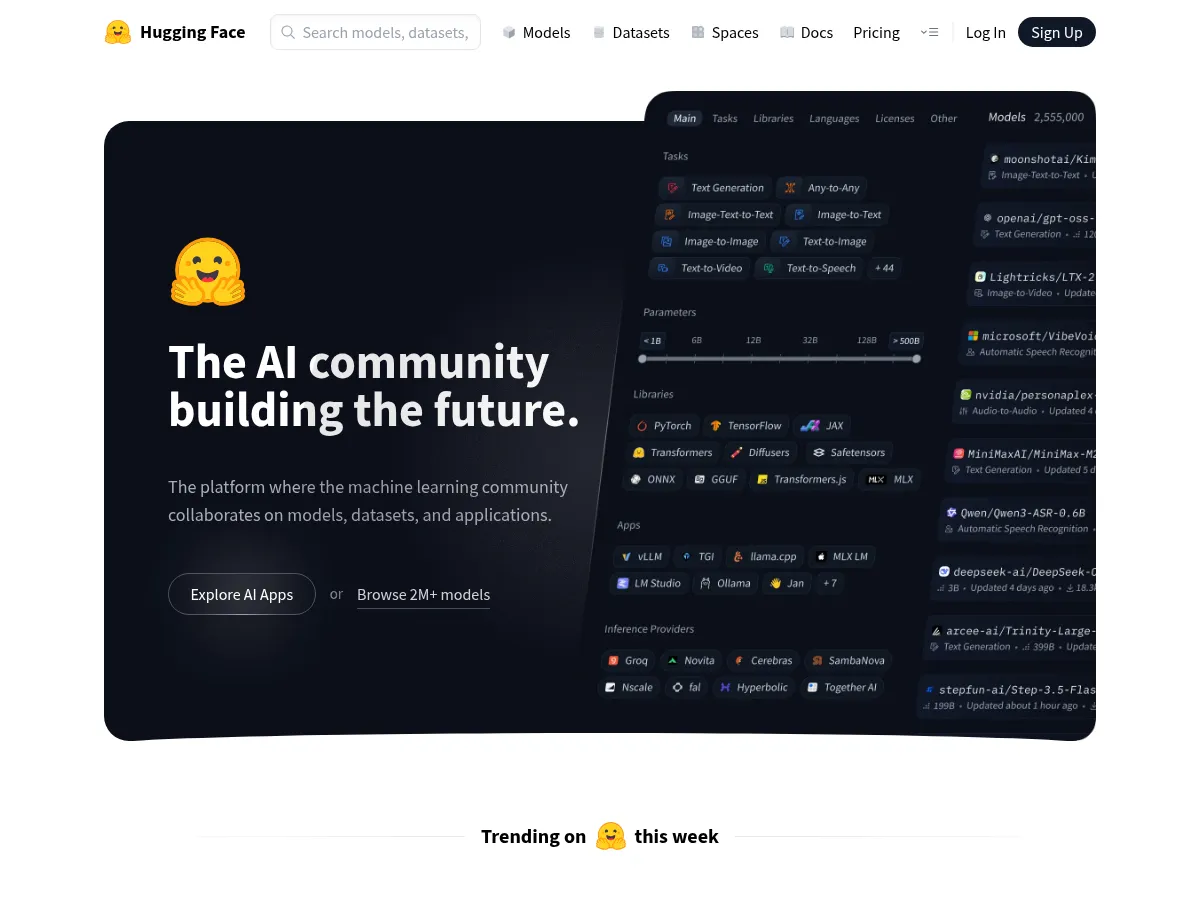

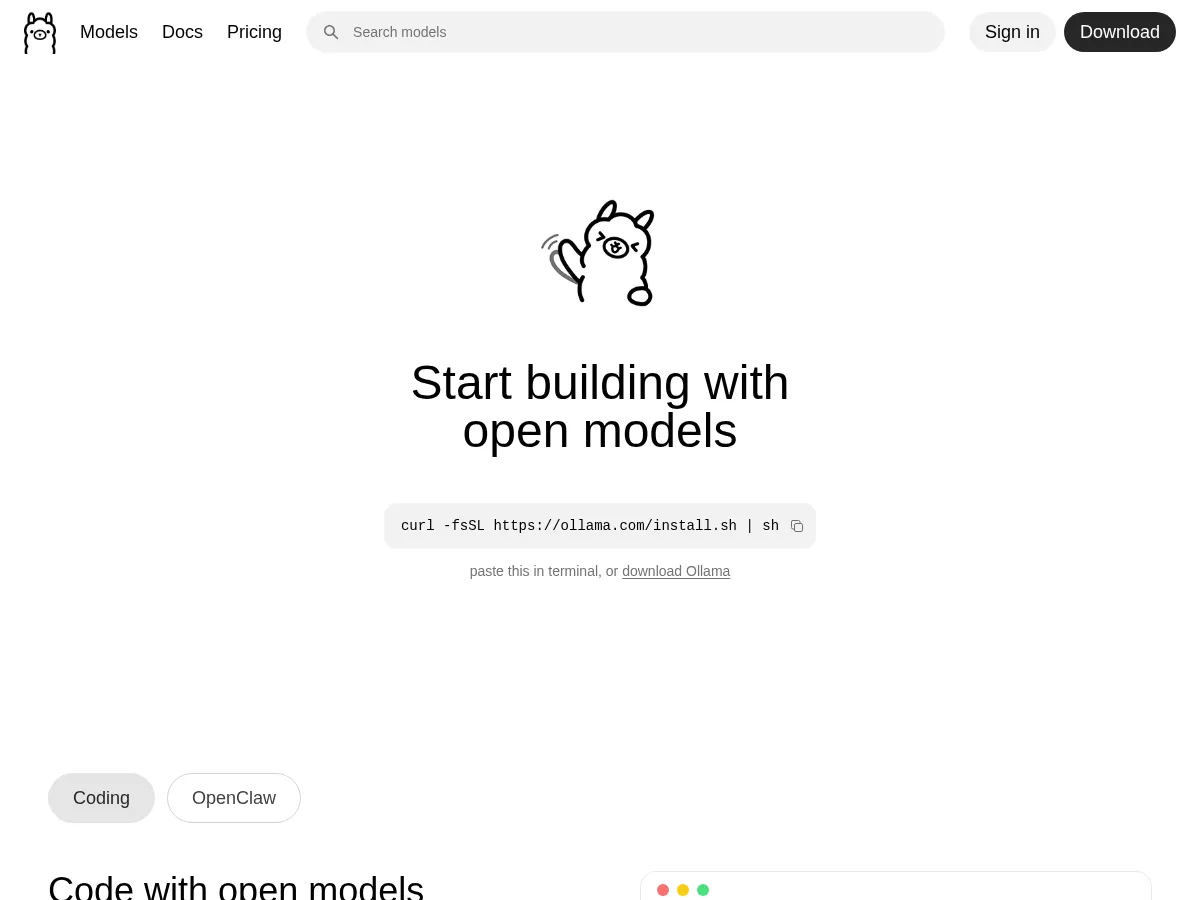

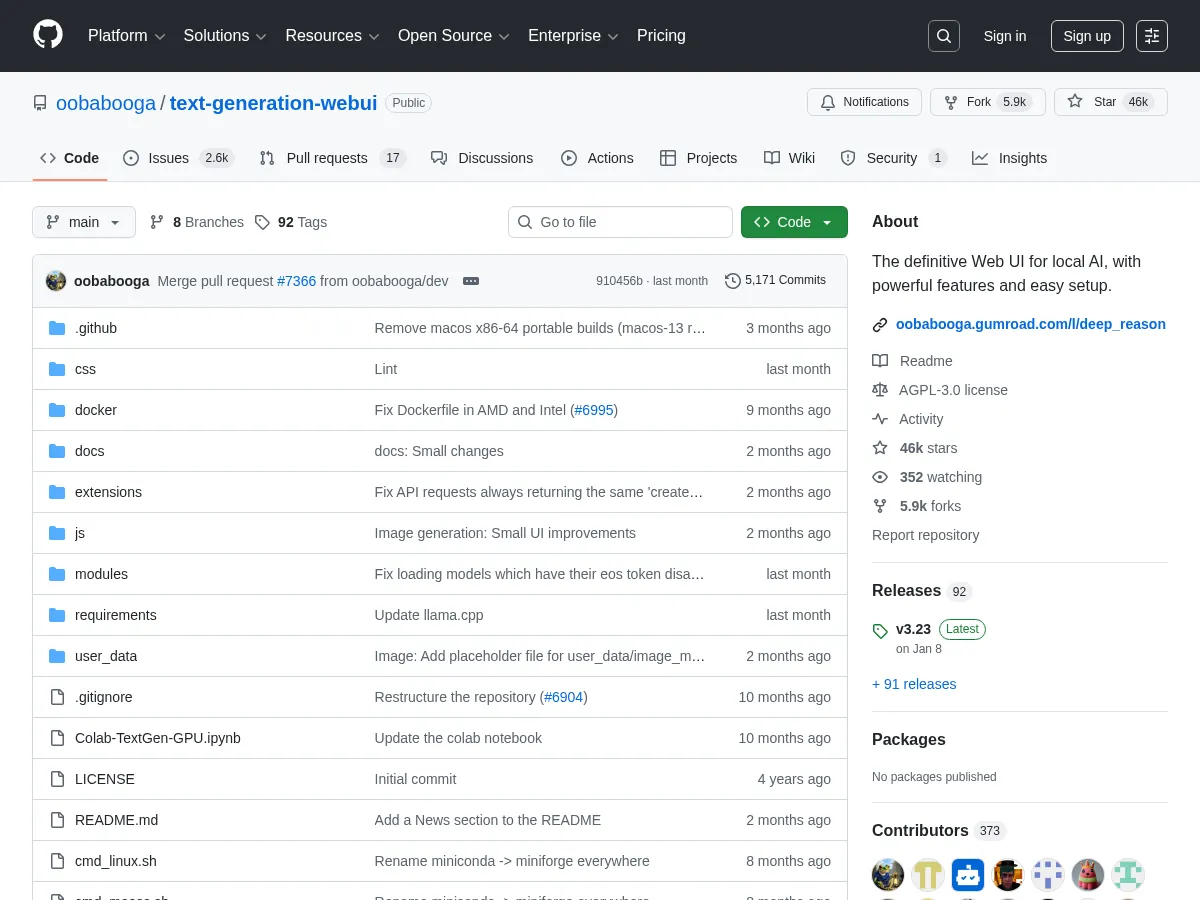

Open-source LLMs have democratized access to capable language models that can run on private infrastructure, with no API fees and no data shared with third parties. Meta's Llama 3 and Google's Gemma 2 have set new benchmarks for open-weight capability. Ollama makes running these models locally as simple as a single command, while Together AI and Groq provide cloud inference for teams that need open models at scale. Hugging Face hosts much of the open-source model ecosystem, and vLLM provides the high-throughput serving engine behind many deployments.
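As a rough illustration of local inference, the sketch below sends a prompt to an Ollama server over its local REST API using only the Python standard library. It assumes Ollama is already running on its default port (11434) and that a model tagged `llama3` has been pulled, for example with `ollama run llama3`. The helper names `build_generate_request` and `generate` are hypothetical and chosen for this example only.

```python
import json
import urllib.request

# Assumption: Ollama is listening on its default local address and port.
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_generate_request(prompt, model="llama3", url=OLLAMA_URL):
    """Build a POST request for Ollama's /api/generate endpoint.

    The model tag "llama3" is an assumption; use whichever model you pulled.
    """
    payload = {
        "model": model,
        "prompt": prompt,
        "stream": False,  # ask for one JSON object instead of a token stream
    }
    return urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(prompt, model="llama3", url=OLLAMA_URL, timeout=120):
    """Send the prompt to the local server and return the generated text."""
    request = build_generate_request(prompt, model, url)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        body = json.loads(response.read().decode("utf-8"))
    return body["response"]
```

With the server running, `print(generate("Explain open-weight models in one sentence."))` should print the model's reply. Nothing leaves the machine in this setup, which is the privacy property described above.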

| Rank | Rating |
|------|--------|
| 1 | 4.9 |
| 2 | 4.8 |
| 3 | 4.8 |
| 4 | 4.8 |
| 5 | 4.7 |
| 6 | 4.7 |
| 7 | 4.6 |
| 8 | 4.5 |
| 9 | 4.5 |
| 10 | 4.4 |
| 11 | 4.3 |
| 12 | 4.0 |