AI Model Hosting - Directory w/ AI Reviews

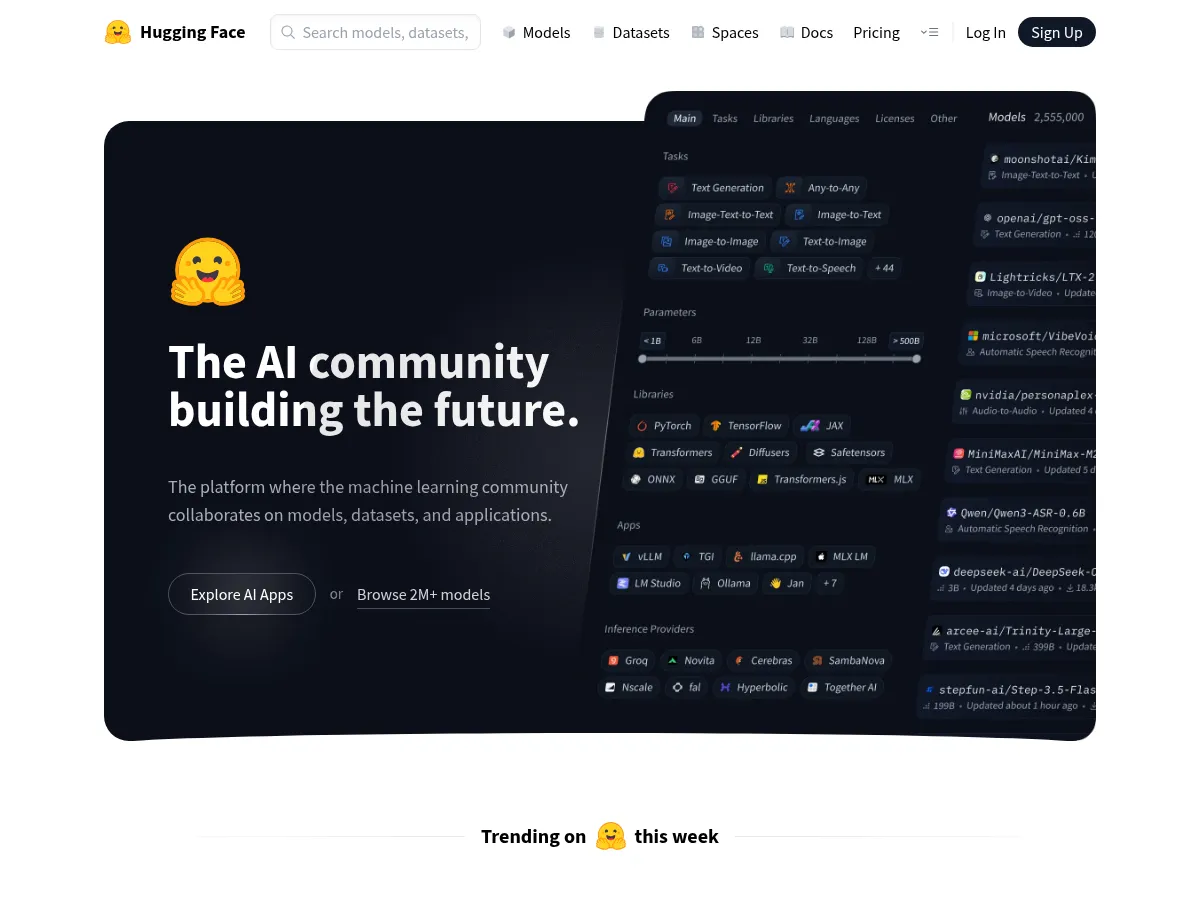

Running AI models in production requires infrastructure optimized for latency, throughput, and cost. Hugging Face's Inference Endpoints and Replicate let developers deploy a model behind a REST API in minutes. Ollama makes it easy to run open-weight models locally and Together AI serves them in the cloud, while Groq's LPU inference chips deliver sub-100ms response times for real-time applications.

| Rank | Rating |
| --- | --- |
| 1 | 4.8 |
| 2 | 4.8 |
| 3 | 4.7 |
| 4 | 4.7 |
| 5 | 4.6 |
| 6 | 4.6 |
| 7 | 4.4 |
| 8 | 4.4 |
| 9 | 4.4 |
| 10 | 4.2 |
| 11 | 4.0 |